AI-Fiber: AI-Powered Electrospinning

2025-08-29 · 3 min read

In scientific research, we often face a dilemma: our analytical tools are powerful but isolated. We might use ImageJ to analyze electron microscopy images, Python scripts to process data, and yet another environment to build predictive models. The workflow is fragmented, and data has to be moved by hand between programs, which is slow and error-prone.

Is it possible to integrate these tools behind a single, intelligent entry point, so that researchers can complete the entire process, from image analysis to performance prediction, using only the most natural form of interaction: conversation in plain language?

This is the goal of my graduation project, AI-Fiber: an intelligent electrospinning research assistant built on a Large Language Model (LLM).

Open Source Project: https://github.com/breeeak/ai-fiber

Live Demo: https://aifiber.tech

Core Idea: The "LLM + Tools" Golden Paradigm

While today's Large Language Models (LLMs) have extraordinary capabilities in language understanding and generation, they still face risks of "hallucination" and inaccuracy when performing scientific calculations that demand high precision and reliability. Directly asking an LLM to analyze a microscopy image and calculate fiber diameters often yields untrustworthy results.

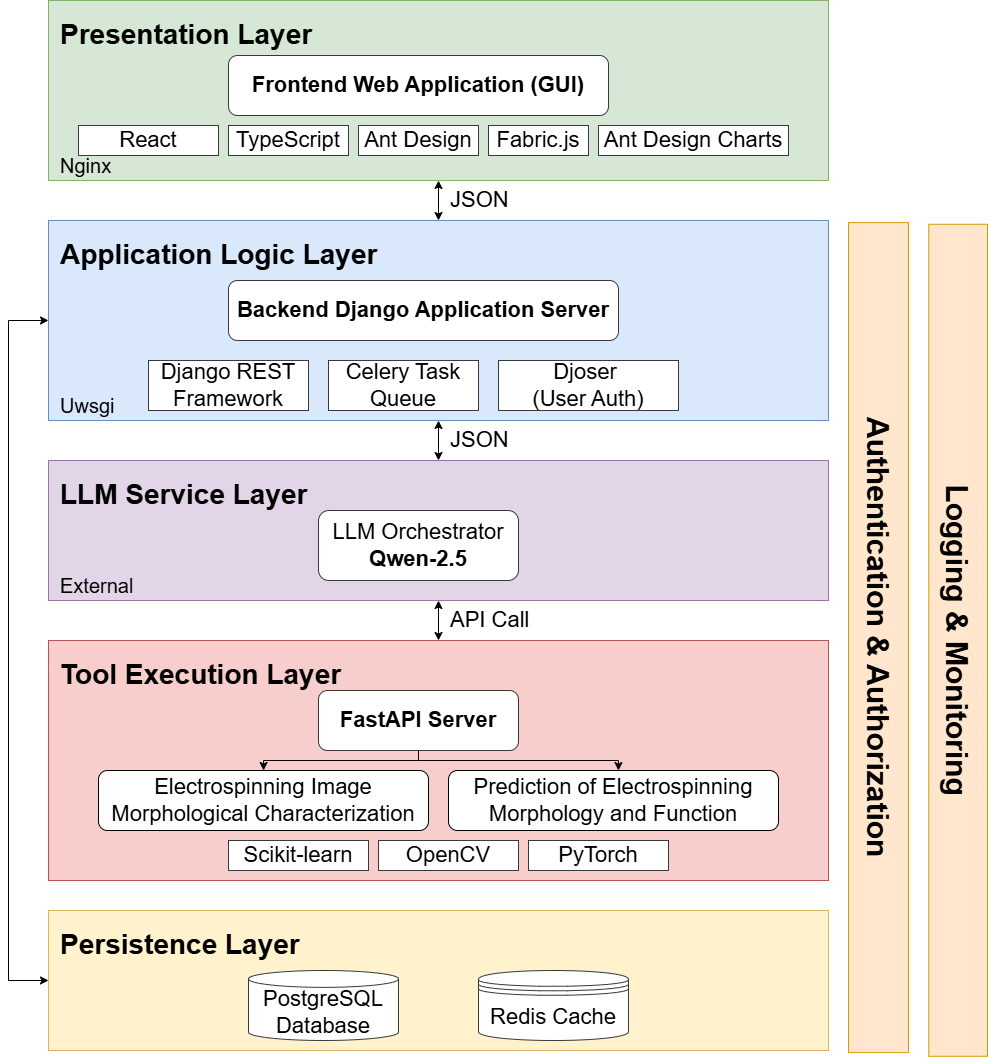

Therefore, AI-Fiber's core architecture adopts the "LLM + Tools" paradigm.

- The LLM acts as the "Intelligent Brain": It is responsible for understanding the user's natural language commands, decomposing tasks, and deciding which specialized tool to call for execution.

- Specialized Algorithms act as the "Efficient Hands and Feet": The project's specialized models, covering literature information extraction (SpinSci-Ex), scale recognition (YOLO-OCR), fiber segmentation (SAM-Fiber), and performance prediction (LightGBM), have each been encapsulated as an independent "tool" callable via API.

This way, the LLM does what it does best (understanding and orchestrating), while the scientific computations themselves are handled by rigorously validated, specialized models. The result combines intuitive interaction with scientific rigor.
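The division of labor described above can be sketched as a small tool registry plus a dispatcher. This is a minimal illustration, not AI-Fiber's actual code: the `Tool` class, the tool names, and the placeholder functions are hypothetical, and `choose_tool` is a keyword-matching stand-in for the step where the LLM would pick a tool.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict


@dataclass
class Tool:
    name: str
    description: str  # text an LLM would read when deciding which tool to call
    run: Callable[[Dict[str, Any]], Dict[str, Any]]


def analyze_image(args: Dict[str, Any]) -> Dict[str, Any]:
    # Placeholder: a real tool would run scale recognition and fiber segmentation.
    return {"tool": "image_morphology", "image": args["image"]}


def predict_properties(args: Dict[str, Any]) -> Dict[str, Any]:
    # Placeholder: a real tool would call the trained prediction model.
    return {"tool": "property_prediction", "params": args["params"]}


# Registry of callable tools, keyed by (hypothetical) tool name.
TOOLS: Dict[str, Tool] = {
    tool.name: tool
    for tool in (
        Tool("image_morphology", "Measure fiber diameters in an SEM image.", analyze_image),
        Tool("property_prediction", "Predict fiber properties from process parameters.", predict_properties),
    )
}


def choose_tool(message: str) -> str:
    """Stand-in for the LLM's tool-selection step (simple keyword match)."""
    text = message.lower()
    if "predict" in text:
        return "property_prediction"
    if "image" in text:
        return "image_morphology"
    raise ValueError("No registered tool matches this request.")


def handle(message: str, args: Dict[str, Any]) -> Dict[str, Any]:
    """Route a user request to the chosen tool and return its result."""
    return TOOLS[choose_tool(message)].run(args)
```

The point of the structure is that the router only selects and forwards; every number in the final answer comes from a tool function, not from generated text.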

Functionality Showcase: Two Typical Research Scenarios

How does AI-Fiber assist researchers in their daily work? Let's look at two real-world case studies.

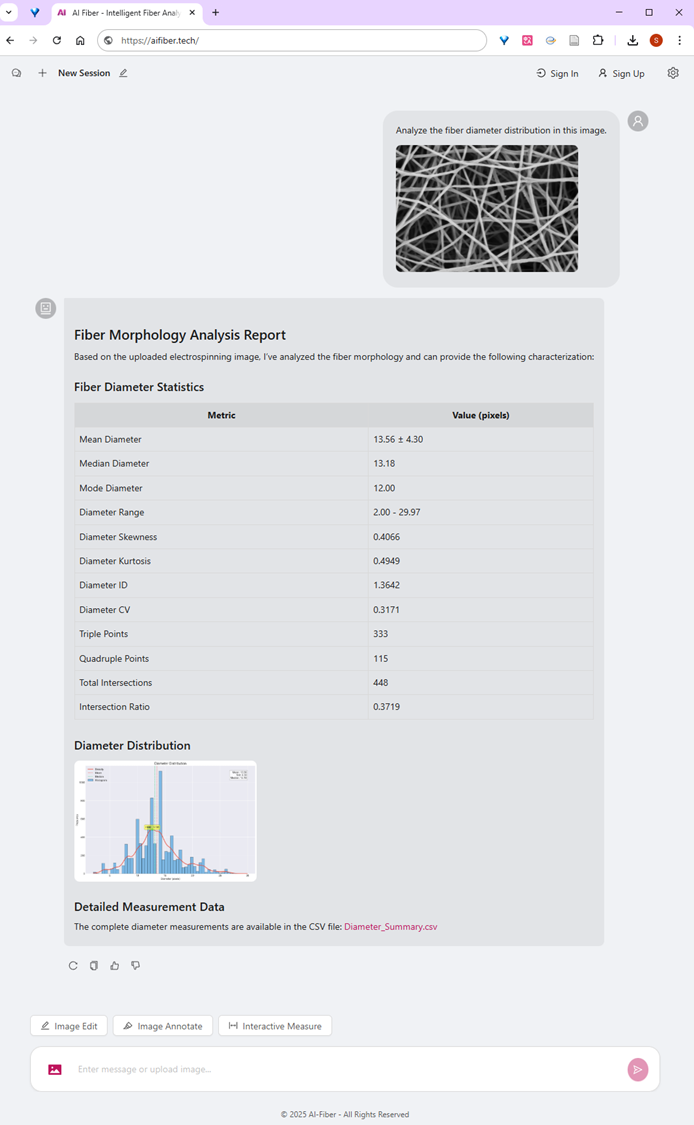

Case Study 1: Quantitative Analysis of Microscopy Images with a Single Sentence

In the past, analyzing the fiber diameter distribution from an SEM image required a series of tedious steps: opening the software, calibrating the scale, segmenting the image, adjusting thresholds, measuring, and statistical analysis.

Now, the user simply uploads an image and says to AI-Fiber:

"Analyze the fiber diameter distribution in this image."

AI-Fiber's LLM brain immediately understands this command and automatically calls the back-end "Image Morphological Characterization" tool. After the analysis is complete, the system directly generates a report with text and graphics in the chat interface, including statistical data (mean, variance, etc.) and a histogram, and provides a link to download the raw data.

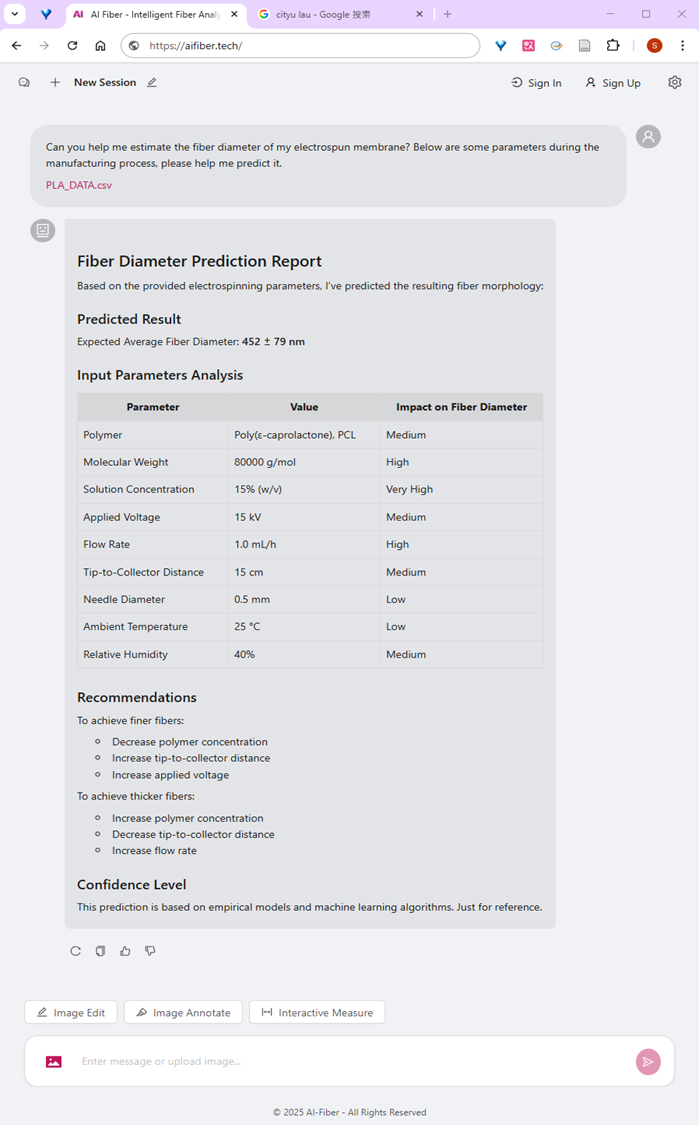
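The statistical part of such a report can be sketched in a few lines. The example below assumes the segmentation step has already produced fiber widths in pixels and that the scale step has produced a nanometers-per-pixel factor; the function name, parameter names, and bin width are illustrative assumptions, not taken from the project.

```python
import statistics
from collections import Counter
from typing import Dict, List, Tuple


def summarize_diameters(
    widths_px: List[float], nm_per_px: float, bin_width_nm: float = 50.0
) -> Dict[str, object]:
    """Convert pixel widths to nanometers and compute summary statistics."""
    if len(widths_px) < 2:
        raise ValueError("Need at least two measurements for a sample variance.")

    diameters_nm = [w * nm_per_px for w in widths_px]

    # Count how many diameters fall into each fixed-width bin.
    counts = Counter(int(d // bin_width_nm) for d in diameters_nm)
    histogram: Dict[Tuple[float, float], int] = {
        (i * bin_width_nm, (i + 1) * bin_width_nm): counts[i] for i in sorted(counts)
    }

    return {
        "mean_nm": statistics.fmean(diameters_nm),
        "variance_nm2": statistics.variance(diameters_nm),  # sample variance
        "histogram": histogram,
    }


report = summarize_diameters([50, 60, 70, 80, 90], nm_per_px=2.0)
```

Here `report["histogram"]` maps each bin's `(lower, upper)` bounds in nanometers to a count, which is the data a plotting step would need to draw the histogram.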

Case Study 2: From "Prediction" to "Guidance," Becoming Your Intelligent Experiment Partner

Beyond analyzing existing data, AI-Fiber can also assist researchers with experimental design.

A user can input a set of process parameters and ask:

"Predict the fiber diameter and tensile strength for these parameters."

The system calls the back-end prediction model and provides a precise prediction with an uncertainty interval. Furthermore, it analyzes the impact of each parameter on the results and offers specific optimization advice, such as "To achieve finer fibers, you can try decreasing the polymer concentration or increasing the voltage". This demonstrates the system's potential to move from "forward prediction" toward "inverse design."
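One simple way to turn a forward model into advice of this kind is a one-at-a-time sensitivity check: nudge each parameter, see how the prediction moves, and suggest changes that push the output in the desired direction. The sketch below is an assumption about how this could be done, not the project's method. In particular, `toy_diameter_nm` is a made-up linear stand-in for the trained model, and its coefficients and parameter names are invented for illustration only.

```python
from typing import Callable, Dict, List

Params = Dict[str, float]


def sensitivities(
    predict: Callable[[Params], float], params: Params, rel_step: float = 0.05
) -> Dict[str, float]:
    """Change in the prediction when each parameter alone is raised by rel_step."""
    base = predict(params)
    effects = {}
    for name, value in params.items():
        bumped = dict(params)
        bumped[name] = value * (1.0 + rel_step)
        effects[name] = predict(bumped) - base
    return effects


def advice_for_finer_fibers(effects: Dict[str, float]) -> List[str]:
    """List suggestions, strongest effect first, that would reduce the diameter."""
    tips = []
    for name, delta in sorted(effects.items(), key=lambda kv: -abs(kv[1])):
        if delta > 0:
            tips.append(f"decrease {name}")
        elif delta < 0:
            tips.append(f"increase {name}")
    return tips


def toy_diameter_nm(p: Params) -> float:
    # Made-up stand-in model, NOT the project's LightGBM predictor.
    return 200.0 + 30.0 * p["polymer_concentration_wt_pct"] - 5.0 * p["voltage_kv"]


params = {"polymer_concentration_wt_pct": 10.0, "voltage_kv": 15.0}
tips = advice_for_finer_fibers(sensitivities(toy_diameter_nm, params))
```

With a real model, `predict` would wrap the prediction tool's API call. A one-at-a-time check ignores interactions between parameters, so it is only a first approximation to inverse design.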

Conclusion

The AI-Fiber project is more than a stack of AI models. By using an LLM as an intelligent orchestrator, it brings image analysis, literature extraction, and performance prediction together in one unified, low-barrier research platform. It demonstrates the potential of the "LLM + Tools" paradigm in a vertical scientific domain and offers a practical blueprint for building more powerful "AI Scientists" or "automated laboratories" in the future.