SAM-Fiber: When a Large Vision Model Learns to Deconstruct Complex Nanofiber Networks

2025-08-28 · 2 min read

Project Background: A Persistent Challenge in Nanofiber Analysis

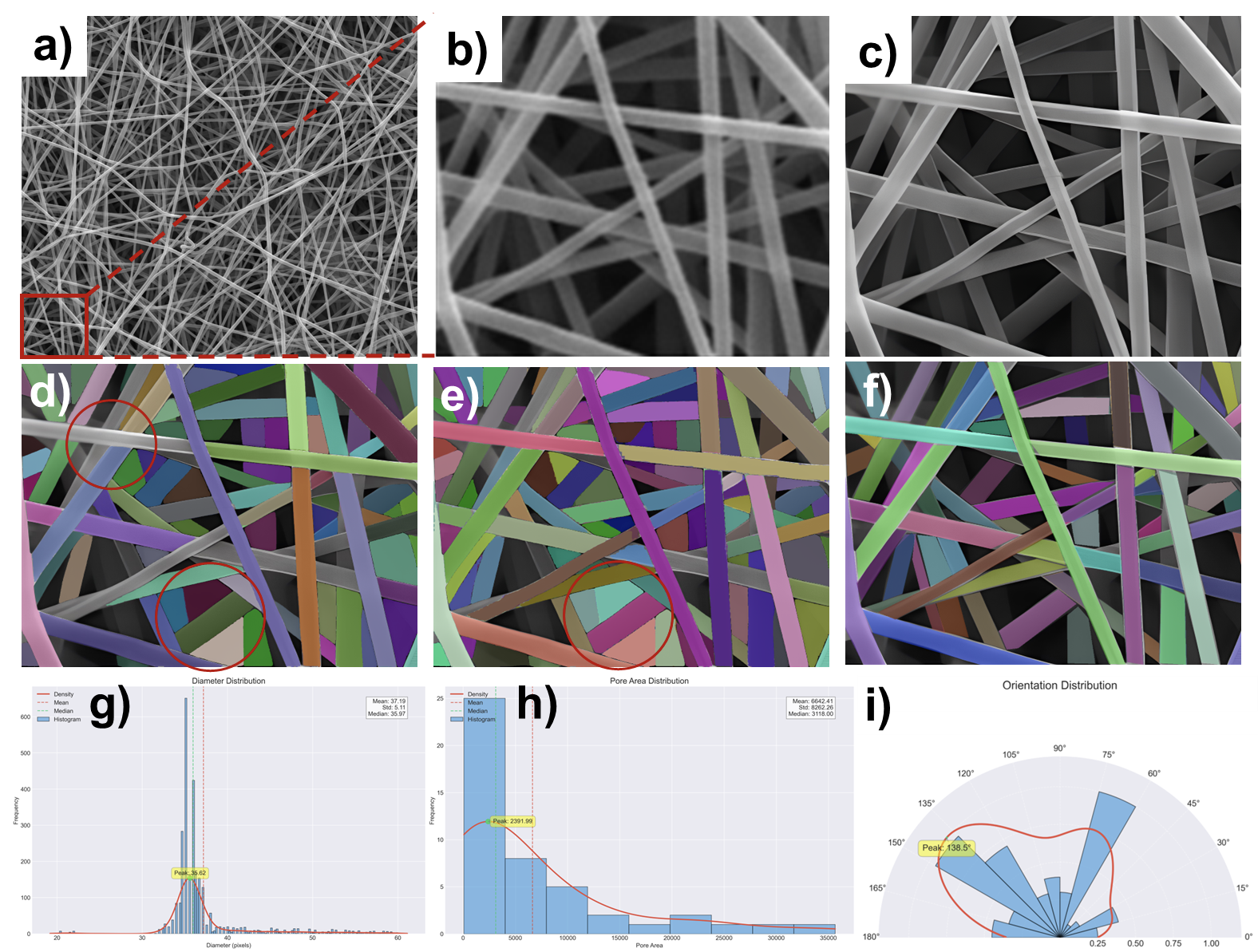

In advanced materials science, the microscopic morphology of electrospun nanofibers (fiber diameter, orientation, porosity, and so on) directly dictates their macroscopic performance. However, accurately analyzing these features is exceptionally difficult. Traditional manual or semi-automated methods, such as ImageJ plugins, are inefficient and subjective, and they often fail on dense, overlapping fiber networks. Standard deep learning models (e.g., U-Net) improve objectivity, but they typically require extensive pixel-level manual annotation, which is costly, and they still struggle to capture fine fiber details.

Technical Solution: SAM-Fiber, an Intelligent Framework Built for Fiber Analysis

To overcome these challenges, this project designed and implemented the SAM-Fiber (Segment Anything Model for Fiber) framework. Rather than applying a general-purpose model off the shelf, it adapts the vision foundation model SAM2 (Segment Anything Model 2) into a specialized fiber analysis tool.

The framework includes three main innovations:

- Detail-Enhancement Preprocessing: Before segmentation, CLAHE (Contrast Limited Adaptive Histogram Equalization) enhances local contrast, and a fine-tuned Real-ESRGAN model performs super-resolution. Together they make fine, previously blurry fibers clearly visible, laying the foundation for accurate segmentation.

- Innovative Skeleton-based Self-Prompting Module (SPGen-S): The core challenge in applying SAM/SAM2 models to this task is supplying effective prompts for dense, linear fiber networks. To solve this, the project created the SPGen-S module, which automatically extracts the fiber "skeleton" (a one-pixel-wide centerline) from the preprocessed image and generates sparse point prompts along it. These prompts tell SAM2 where to segment, removing the need for manual prompting.

- Efficient Model Fine-Tuning Strategy: To conserve computational resources and reduce the risk of overfitting, the project fine-tunes only the mask decoder of SAM2 while keeping its powerful image encoder frozen. This lets the model quickly learn to interpret the skeleton-based prompts and translate them into precise fiber masks.
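The contrast-enhancement half of the preprocessing step can be sketched in NumPy. This is a minimal, simplified CLAHE written for illustration, not the project's code: the function name `clahe`, the tile grid, and the `clip_limit` value are assumptions, and the sketch requires image dimensions that divide evenly by the tile grid. In practice a library routine such as OpenCV's `cv2.createCLAHE` would normally be used. The Real-ESRGAN super-resolution stage is not shown.

```python
import numpy as np


def clahe(img: np.ndarray, tiles=(8, 8), clip_limit: float = 2.0) -> np.ndarray:
    """Contrast Limited Adaptive Histogram Equalization for a 2-D uint8 image.

    Simplifying assumption: height and width are exact multiples of the tile grid.
    """
    h, w = img.shape
    ny, nx = tiles
    th, tw = h // ny, w // nx
    luts = np.empty((ny, nx, 256))

    # 1) Build a clipped-histogram lookup table (LUT) for every tile.
    for i in range(ny):
        for j in range(nx):
            tile = img[i * th:(i + 1) * th, j * tw:(j + 1) * tw]
            hist = np.bincount(tile.ravel(), minlength=256).astype(float)
            limit = max(clip_limit * tile.size / 256, 1.0)
            excess = np.maximum(hist - limit, 0.0).sum()
            # Clip tall bins and spread the clipped counts evenly over all bins;
            # this caps how strongly any tile can stretch its contrast.
            hist = np.minimum(hist, limit) + excess / 256
            luts[i, j] = np.cumsum(hist) * 255.0 / tile.size

    # 2) Bilinearly interpolate between the four nearest tile LUTs
    #    (tile centres sit at half-tile offsets) to avoid block artefacts.
    yf = np.arange(h) / th - 0.5
    xf = np.arange(w) / tw - 0.5
    y0, x0 = np.floor(yf).astype(int), np.floor(xf).astype(int)
    wy, wx = (yf - y0)[:, None], (xf - x0)[None, :]
    y1 = np.clip(y0 + 1, 0, ny - 1)[:, None]
    x1 = np.clip(x0 + 1, 0, nx - 1)[None, :]
    y0 = np.clip(y0, 0, ny - 1)[:, None]
    x0 = np.clip(x0, 0, nx - 1)[None, :]

    out = ((1 - wy) * (1 - wx) * luts[y0, x0, img]
           + (1 - wy) * wx * luts[y0, x1, img]
           + wy * (1 - wx) * luts[y1, x0, img]
           + wy * wx * luts[y1, x1, img])
    return np.clip(np.rint(out), 0, 255).astype(np.uint8)
```

A larger `clip_limit` allows stronger local stretching but also amplifies noise more, which matters for faint fibers sitting on a noisy background.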
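The article does not detail how SPGen-S skeletonizes fibers or spaces its prompts, so the following is only a hypothetical sketch of the general idea. It assumes the preprocessed image has already been binarized into a fiber mask, thins that mask with the classic Zhang-Suen algorithm, and keeps one skeleton pixel per `spacing` x `spacing` grid cell as a sparse point prompt. `zhang_suen_thin`, `skeleton_point_prompts`, and `spacing` are illustrative names, not part of SAM-Fiber.

```python
import numpy as np


def zhang_suen_thin(mask: np.ndarray) -> np.ndarray:
    """Thin a binary mask to a one-pixel-wide skeleton (Zhang-Suen thinning)."""
    img = np.pad((mask > 0).astype(np.uint8), 1)  # zero border: every pixel has 8 neighbours
    changed = True
    while changed:
        changed = False
        for step in (0, 1):
            c = img[1:-1, 1:-1]  # view: writes go straight into img
            # Neighbours P2..P9, clockwise starting from north.
            p2, p3, p4 = img[:-2, 1:-1], img[:-2, 2:], img[1:-1, 2:]
            p5, p6, p7 = img[2:, 2:], img[2:, 1:-1], img[2:, :-2]
            p8, p9 = img[1:-1, :-2], img[:-2, :-2]
            ring = [p2, p3, p4, p5, p6, p7, p8, p9, p2]
            b = sum(p.astype(int) for p in ring[:8])  # count of foreground neighbours
            # Number of 0 -> 1 transitions walking once around the neighbourhood.
            a = sum(((ring[k] == 0) & (ring[k + 1] == 1)).astype(int) for k in range(8))
            if step == 0:
                cond = (p2 * p4 * p6 == 0) & (p4 * p6 * p8 == 0)
            else:
                cond = (p2 * p4 * p8 == 0) & (p2 * p6 * p8 == 0)
            remove = (c == 1) & (b >= 2) & (b <= 6) & (a == 1) & cond
            if remove.any():
                c[remove] = 0  # delete all flagged pixels at once (parallel update)
                changed = True
    return img[1:-1, 1:-1].copy()


def skeleton_point_prompts(skel: np.ndarray, spacing: int = 16):
    """Pick at most one skeleton pixel per spacing x spacing grid cell.

    Returns (coords, labels): coords is (N, 2) in (x, y) pixel order and
    every label is 1, i.e. a foreground point.
    """
    ys, xs = np.nonzero(skel)
    chosen = {}
    for y, x in zip(ys, xs):
        chosen.setdefault((y // spacing, x // spacing), (x, y))  # first hit per cell
    coords = np.array(list(chosen.values()), dtype=np.float32).reshape(-1, 2)
    labels = np.ones(len(coords), dtype=np.int32)
    return coords, labels


if __name__ == "__main__":
    demo = np.zeros((32, 64), dtype=np.uint8)
    demo[10:17, 5:56] = 1  # a 7-pixel-thick horizontal "fiber"
    coords, labels = skeleton_point_prompts(zhang_suen_thin(demo), spacing=16)
    print(coords, labels)
```

Returning (x, y) coordinates with label 1 mirrors the `point_coords` / `point_labels` convention used by SAM-style predictors. Grid-based sampling keeps the prompts sparse and spread along each fiber instead of crowding them where the skeleton is dense.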
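Decoder-only fine-tuning comes down to freezing parameters and handing the optimizer only the decoder's weights. The PyTorch sketch below uses a tiny hypothetical stand-in model rather than SAM2 itself; the class `ToyPromptableSegmenter`, the module names `image_encoder`, `prompt_encoder`, and `mask_decoder`, the BCE loss, and the learning rate are all assumptions chosen for illustration, and the real SAM2 submodules may be named differently.

```python
import torch
from torch import nn


class ToyPromptableSegmenter(nn.Module):
    """Hypothetical SAM-like stand-in: image encoder, prompt encoder, mask decoder."""

    def __init__(self, dim: int = 16):
        super().__init__()
        self.image_encoder = nn.Conv2d(3, dim, kernel_size=3, padding=1)
        self.prompt_encoder = nn.Linear(2, dim)
        self.mask_decoder = nn.Conv2d(dim, 1, kernel_size=1)

    def forward(self, image: torch.Tensor, points: torch.Tensor) -> torch.Tensor:
        feats = self.image_encoder(image)                 # (B, dim, H, W)
        prompt = self.prompt_encoder(points).mean(dim=1)  # (B, dim): pool the N point embeddings
        return self.mask_decoder(feats + prompt[:, :, None, None])  # (B, 1, H, W) mask logits


def decoder_only_parameters(model: nn.Module) -> list:
    """Freeze every parameter, then re-enable gradients for the mask decoder only."""
    for p in model.parameters():
        p.requires_grad_(False)
    for p in model.mask_decoder.parameters():
        p.requires_grad_(True)
    return [p for p in model.parameters() if p.requires_grad]


def train_step(model, optimizer, image, points, target) -> float:
    model.train()
    logits = model(image, points)
    # Plain BCE is an assumed loss; the article does not state which loss was used.
    loss = nn.functional.binary_cross_entropy_with_logits(logits, target)
    optimizer.zero_grad()
    loss.backward()
    optimizer.step()
    return loss.item()


if __name__ == "__main__":
    torch.manual_seed(0)
    model = ToyPromptableSegmenter()
    optimizer = torch.optim.AdamW(decoder_only_parameters(model), lr=1e-4)
    image, points = torch.randn(2, 3, 32, 32), torch.rand(2, 5, 2)
    target = (torch.rand(2, 1, 32, 32) > 0.5).float()
    print(train_step(model, optimizer, image, points, target))
```

Because the frozen parameters receive no gradients, the encoder needs no gradient buffers or optimizer state, and autograd does not record its forward pass when the input image itself does not require gradients.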

Results and Discussion

SAM-Fiber achieved outstanding segmentation performance on real-world electrospun microscopy images.

- In terms of quantitative metrics, SAM-Fiber reached a mean Dice coefficient (mDice) of 0.87 and a mean Intersection over Union (mIoU) of 0.81, significantly outperforming traditional ImageJ methods as well as standard U-Net and adapted UN-SAM models.

- More importantly, the high-quality segmentation masks can be converted directly into accurate measurements of morphological parameters such as fiber diameter, orientation, and porosity.
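For reference, the two reported metrics compare a predicted mask P with a ground-truth mask G: Dice = 2|P ∩ G| / (|P| + |G|) and IoU = |P ∩ G| / |P ∪ G|. The article does not say whether the means are taken per image or per class, so this NumPy sketch assumes binary fiber-versus-background masks averaged over test images; `dice_and_iou` and `mean_dice_iou` are illustrative names.

```python
import numpy as np


def dice_and_iou(pred: np.ndarray, gt: np.ndarray, eps: float = 1e-7):
    """Dice and IoU for one pair of binary masks.

    eps keeps the ratios defined when both masks are empty (both scores -> 1).
    """
    p, g = pred.astype(bool), gt.astype(bool)
    inter = np.logical_and(p, g).sum()  # |P intersect G|
    union = np.logical_or(p, g).sum()   # |P union G|
    dice = (2 * inter + eps) / (p.sum() + g.sum() + eps)
    iou = (inter + eps) / (union + eps)
    return float(dice), float(iou)


def mean_dice_iou(preds, gts):
    """Average per-image Dice and IoU over a set of (prediction, ground truth) pairs."""
    scores = np.array([dice_and_iou(p, g) for p, g in zip(preds, gts)])
    return float(scores[:, 0].mean()), float(scores[:, 1].mean())
```

For a single mask the two scores are linked by Dice = 2·IoU / (1 + IoU), so Dice is never below IoU, which is consistent with the reported 0.87 versus 0.81.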
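The article does not describe how masks are turned into morphological parameters. One deliberately simple approach, offered only as an assumption, is sketched below: mean fiber diameter as fiber area divided by skeleton length, 2-D porosity as the fraction of the image not covered by fiber, and a dominant orientation from the principal axis of the skeleton pixel coordinates. The skeleton can come from any thinning routine (for example scikit-image's `skimage.morphology.skeletonize`). `fiber_morphology` and its output keys are hypothetical, and all values are in pixels, so a calibrated pixel size would be needed to report physical units.

```python
import numpy as np


def fiber_morphology(mask: np.ndarray, skeleton: np.ndarray) -> dict:
    """Rough 2-D morphology estimates from a binary fiber mask and its skeleton."""
    fiber = mask.astype(bool)
    skel = skeleton.astype(bool)
    length = skel.sum()  # skeleton pixel count as a crude centre-line length
    # Mean diameter ~ fiber area / centre-line length
    # (exact for a straight band of constant width).
    diameter = fiber.sum() / length if length else float("nan")
    # 2-D porosity proxy: fraction of the field of view not covered by fiber.
    porosity = 1.0 - fiber.mean()
    # Dominant orientation: principal axis of the skeleton pixel coordinates,
    # in degrees within [0, 180); image y points down, so angles are mirrored.
    ys, xs = np.nonzero(skel)
    angle = float("nan")
    if len(xs) >= 2:
        cov = np.cov(np.vstack([xs, ys]).astype(float))
        evals, evecs = np.linalg.eigh(cov)
        vx, vy = evecs[:, np.argmax(evals)]
        angle = float(np.degrees(np.arctan2(vy, vx)) % 180.0)
    return {
        "mean_diameter_px": float(diameter),
        "porosity": float(porosity),
        "orientation_deg": angle,
    }
```

A single principal axis summarizes a whole network crudely; per-fiber or per-segment orientations would be needed for an orientation distribution, and counting skeleton pixels slightly underestimates the length of diagonal fibers.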

In summary, the SAM-Fiber project adapted a general-purpose vision foundation model to a specific and challenging scientific image analysis problem by injecting domain knowledge (such as skeleton-based self-prompting) and applying a series of engineering optimizations. It provides a powerful new tool for the automated, high-throughput, and high-precision characterization of nanofibers.